Most advice on how to improve customer experience strategy starts in the wrong place. It starts with delight, personalization, or a new layer of AI. In large contact centers, that is usually how teams create another disconnected initiative.

CX programs rarely fail because leaders do not care. They fail because the work is not run like operations. There is no hard baseline, no sequencing logic, no ownership across channels, and no governance after launch. The result is predictable. A strategy deck gets approved, a platform gets bought, and the customer journey still breaks at the same handoff points.

That gap is getting worse, not better. According to Forrester’s 2025 Global Customer Experience Index, 21% of brands declined in customer experience quality while only 6% improved, and in the US 25% of brands declined versus 7% that improved (Forrester). That is not a creativity problem. It is an execution problem.

If you run a contact center, you do not need another list of broad principles. You need a method that survives procurement, implementation, compliance review, and frontline reality. That is the standard I use when I look at a CX overhaul. The strategy has to be measurable, fundable, and durable. If it cannot make it through those tests, it is not a strategy.

Why Most Customer Experience Strategies Fail

The most common mistake is treating CX like a brand initiative instead of an operating model. Leaders approve a vision, launch a listening program, maybe add another digital channel, then wonder why customers still repeat themselves and agents still bounce between systems.

The issue is usually not intent. The issue is that no one built the operational spine behind the experience.

Three failure patterns show up again and again:

- The strategy is too abstract. Teams define outcomes like “be easier to do business with” but never translate them into routing rules, knowledge design, QA criteria, callback logic, or escalation ownership.

- The roadmap is driven by tools. A vendor demo becomes the strategy. The work starts with features instead of journey friction.

- No one owns the cross-functional breaks. Contact center, digital, back office, compliance, IT, and product each optimize their own piece. Customers experience the seams.

Many organizations also overinvest in survey collection and underinvest in process correction. They can tell you the score dropped. They cannot tell you which transfer path, policy, or knowledge gap caused it.

Practical rule: If your CX plan does not identify who changes the workflow, who funds the fix, and how the impact will be measured, it is still a concept.

I also push back on the idea that the goal is to “wow” every customer. In enterprise service environments, customers usually want competence, continuity, and low effort. They want the right answer, the full context, and no rework.

That requires discipline. It also requires leaders to keep up with how the operating environment is changing. A useful read on that broader shift is “The tech world is shifting fast. Here’s what CX leaders need to know.”

Diagnose Your Current State with Precision

Shallow CX assessments waste time. Reviewing a dashboard, pulling survey comments, and holding two stakeholder meetings usually produces the same result: a vague list of complaints with no clear owner, no operational root cause, and no usable business case for change.

A real diagnostic has to explain three things: where the journey breaks, what inside the operation causes the break, and which constraints will block the fix if you ignore them.

Start with the journey, not the org chart

Customers experience a resolution path, not your reporting lines.

I map the highest-volume and highest-risk journeys end to end first. In an enterprise contact center, that usually means the journeys that drive repeat contacts, complaints, regulatory exposure, or heavy back-office dependency. Billing disputes, claims status, account access, fraud alerts, onboarding, service changes, and prior authorization questions are common starting points.

Map the journey at the level where operations can act on it. High-level swim lanes are not enough. Capture:

- Entry points: IVR, chat, portal, mobile app, email, branch, agent

- Handoffs: bot to agent, agent to tier 2, front office to back office, digital to phone, service to sales

- Decision points: authentication, disclosures, policy exceptions, approval rules, eligibility checks

- Failure points: repeat verification, unresolved cases, dead ends, transfers, missing context, unclear status

That work often exposes a pattern executives miss. The same friction point usually hurts both sides of the interaction. If the customer has to repeat information, the agent is also working without context. If the customer cannot see case status, the agent absorbs the follow-up volume.

Pull data from systems that reflect the work

One platform never gives you the full picture. The phone platform shows routing and wait patterns. The CRM shows continuity problems. Back-office systems show where work sits. QA and speech tools show whether the issue is coaching, policy, or process design.

This is the minimum stack I review:

| System | What to look for | Why it matters |
|---|---|---|
| CCaaS platform | Transfer patterns, repeat contacts, queue exits, callback usage, containment paths | Shows where routing or channel design is failing |
| CRM | Case reopen reasons, note quality, follow-up delays, next-best-action usage | Exposes broken continuity and weak case ownership |
| WFM and scheduling | Occupancy pressure, adherence trends, intraday mismatch | Shows whether staffing choices are creating avoidable friction |
| QA and speech analytics | Failure themes, compliance risk, dead air, policy confusion, empathy gaps | Separates training issues from process defects |
| Knowledge base | Search failures, article age, duplicate content, abandonment after view | Shows whether self-service and agent assist can be trusted |
| Back-office systems | Queue aging, touch count, approvals, exception handling | Reveals the hidden delays customers feel and agents cannot fix |

I also look for system conflicts, not just system metrics. A journey may look acceptable inside the CCaaS dashboard while failing badly across the full process because the case leaves the front office and disappears into a manual review queue. If you miss that handoff, you diagnose the symptom and fund the wrong fix.
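As a rough illustration of the kind of cut I mean, here is a minimal sketch that turns raw contact records into repeat-contact and transfer rates per contact reason. The record layout, the sample rows, and the seven-day repeat window are all assumptions for illustration; a real export from a CCaaS platform or CRM will look different and will need its own definition of a repeat contact.

```python
from collections import defaultdict
from datetime import datetime, timedelta

# Hypothetical contact records: (customer_id, contact_reason, timestamp, was_transferred)
contacts = [
    ("c1", "billing_dispute", datetime(2025, 3, 1, 9, 0), True),
    ("c1", "billing_dispute", datetime(2025, 3, 3, 14, 0), False),
    ("c2", "billing_dispute", datetime(2025, 3, 2, 10, 0), False),
    ("c3", "account_access", datetime(2025, 3, 1, 11, 0), False),
    ("c3", "account_access", datetime(2025, 3, 20, 11, 0), False),
]

# Assumed definition: same customer, same reason, within seven days.
REPEAT_WINDOW = timedelta(days=7)


def journey_friction(records, window=REPEAT_WINDOW):
    """Return contact count, repeat rate, and transfer rate per contact reason."""
    last_seen = {}
    totals = defaultdict(lambda: {"contacts": 0, "repeats": 0, "transfers": 0})
    # Process in time order so "previous contact" is well defined.
    for customer, reason, ts, transferred in sorted(records, key=lambda r: r[2]):
        stats = totals[reason]
        stats["contacts"] += 1
        stats["transfers"] += int(transferred)
        previous = last_seen.get((customer, reason))
        if previous is not None and ts - previous <= window:
            stats["repeats"] += 1
        last_seen[(customer, reason)] = ts
    return {
        reason: {
            "contacts": s["contacts"],
            "repeat_rate": s["repeats"] / s["contacts"],
            "transfer_rate": s["transfers"] / s["contacts"],
        }
        for reason, s in totals.items()
    }


summary = journey_friction(contacts)
for reason, stats in summary.items():
    print(reason, stats)
```

The value of a cut like this is less the numbers themselves than the argument it forces about definitions. If operations, IT, and finance cannot agree on what counts as a repeat contact, the diagnostic is not ready to support a funding request.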

Add customer and agent evidence

Operational data narrows the search. Interviews and observation explain why the failure survives quarter after quarter.

I use customer interviews, supervisor interviews, agent focus groups, and side-by-side observation of live work. Ask for specific moments and workarounds. General opinions are less useful than concrete failure sequences.

Good prompts include:

- What made you switch channels?

- At what point did you stop trusting the process?

- Which steps require agents to leave the main system?

- What can the digital channel not handle cleanly today?

- Where do agents rely on tribal knowledge instead of documented guidance?

- Which policy or approval step creates the most avoidable follow-up?

Agents are usually the fastest path to the truth. They see the policy conflict, the broken integration, the bad knowledge article, and the customer reaction in one interaction.

Customer evidence matters too, especially when you are designing a simple, secure digital customer journey. Security controls, authentication flows, and channel switching rules often look reasonable to internal teams and still create unnecessary effort for customers.

Separate defects from preferences

Many diagnostics go off course at this point. Teams mix hard defects with feature requests and treat them as one backlog.

Keep them separate.

I sort findings into four buckets:

- Friction the customer feels immediately: long transfers, repeated authentication, weak self-service, poor status visibility, disconnected channels

- Friction the agent absorbs: system toggling, weak knowledge design, unclear policy interpretation, poor desktop layout, missing case context

- Friction created by operating model choices: split ownership, narrow queues, back-office bottlenecks, rigid scripts, escalation rules that slow resolution

- Friction tied to technology design: bad integrations, partial CRM views, analytics with no action path, AI copilots that do not fit the workflow

That structure matters because the fix path is different for each category. A policy issue needs a policy owner. A desktop issue needs product, IT, and operations. A queue design issue may need no new technology at all. I have seen teams spend seven figures on platform changes when a routing rule, case ownership model, and knowledge rewrite would have solved the problem faster.

Test whether the organization can execute the fix

A diagnosis is incomplete if it stops at pain points. You also need to know whether the organization can carry the change through procurement, delivery, and adoption.

I check for four execution constraints early:

- Vendor readiness: Can current vendors support the required workflow, integration, reporting, and implementation timeline?

- Data readiness: Do teams trust the definitions, IDs, and cross-system mapping needed to measure the journey?

- Decision rights: Who can approve policy, funding, workflow changes, and channel changes across functions?

- Change capacity: Does the operation have enough training, QA, WFM, and frontline leadership bandwidth to absorb the change?

This is why I prefer a formal enterprise CX roadmap assessment and planning approach over a light discovery exercise. Without that discipline, organizations identify valid problems and still fail because no one tested delivery constraints early enough.

By the end of this step, you should have more than a pain-point inventory. You should have evidence-backed defect patterns tied to specific journeys, teams, systems, handoffs, and execution risks. That is the standard required before you ask finance, IT, procurement, or operations to fund the next move.

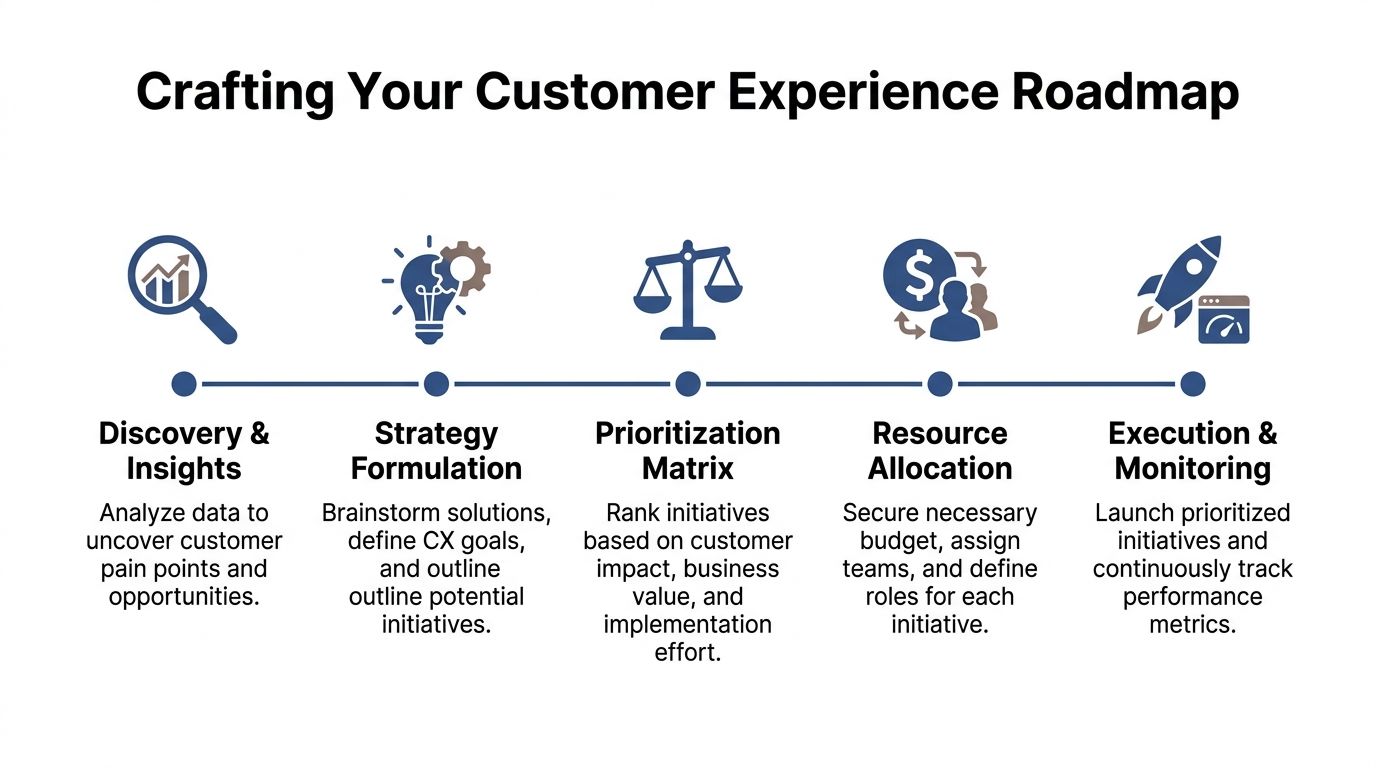

Build a Prioritized and Defensible CX Roadmap

The roadmap is where CX strategy usually breaks down.

Enterprise contact centers rarely fail because they lack ideas. They fail because the roadmap mixes small repairs, policy disputes, system requests, and vendor assumptions into one undifferentiated backlog. Then every stakeholder reads it differently. Operations sees queue fixes. IT sees integration work. Finance sees a cost request without a payback path. Procurement sees a buying event that started before requirements were stable.

A defensible roadmap fixes that by forcing each initiative to earn its place.

Rank work by operational value, not visibility

I do not prioritize based on customer pain alone. In a contact center, a painful issue that touches 2% of volume may deserve less attention than a mediocre journey that creates repeat contacts, escalations, and avoidable handling time all day.

I score initiatives across four dimensions:

| Screen | Question |
|---|---|
| Customer effect | Does this remove friction from a high-volume, high-value, or high-risk journey? |
| Operational effect | Does it reduce repeat contacts, transfers, rework, handle time, or supervisor intervention? |
| Delivery complexity | Does it require policy change, legal review, integration work, retraining, or union consultation? |
| Dependency risk | Is it blocked by another workstream, data cleanup, vendor capability, or funding approval? |

That scoring changes the conversation fast. A mobile app enhancement may look attractive in a steering committee, but a routing redesign, ownership change, and knowledge rewrite often produce a larger improvement in both experience and cost to serve.
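One way to make the four screens comparable is a simple weighted score. The sketch below is illustrative only: the 1-to-5 scale, the weights, and the sample ratings are assumptions, not a standard model, and the choice to subtract complexity and dependency risk from value is one reasonable convention among several.

```python
from dataclasses import dataclass

# Assumed weights: the two "effect" screens add value, the other two subtract drag.
WEIGHTS = {"customer": 0.35, "operational": 0.35, "complexity": 0.15, "dependency": 0.15}


@dataclass
class Initiative:
    name: str
    customer_effect: int      # 1 (low) to 5 (high)
    operational_effect: int   # 1 (low) to 5 (high)
    delivery_complexity: int  # 1 (simple) to 5 (hard)
    dependency_risk: int      # 1 (unblocked) to 5 (heavily blocked)

    def score(self) -> float:
        value = (WEIGHTS["customer"] * self.customer_effect
                 + WEIGHTS["operational"] * self.operational_effect)
        drag = (WEIGHTS["complexity"] * self.delivery_complexity
                + WEIGHTS["dependency"] * self.dependency_risk)
        return round(value - drag, 2)


# Hypothetical ratings agreed in a scoring workshop.
portfolio = [
    Initiative("Mobile app enhancement", 3, 2, 4, 4),
    Initiative("Routing redesign", 4, 5, 2, 2),
    Initiative("Knowledge rewrite", 4, 4, 2, 1),
]

ranked = sorted(portfolio, key=lambda i: i.score(), reverse=True)
for item in ranked:
    print(f"{item.name}: {item.score()}")
```

With these assumed ratings, the routing redesign and knowledge rewrite rank above the mobile app enhancement. The weights matter less than the discipline of making every stakeholder rate the same four questions on the same scale.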

Build the business case at the initiative level

Roadmaps get funded when each item is tied to a business outcome that matters outside the service organization.

That means every initiative should answer five questions before it goes to executive review:

- Which journey or contact reason does this fix?

- Which operating defect does it remove?

- Which business metric should move if the fix works?

- Which teams must approve or deliver it?

- How will success be measured beyond CSAT or NPS?

If the owner cannot answer those five points cleanly, the item is still a concept, not a roadmap entry.
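Teams that track the roadmap in a structured tool can enforce that gate mechanically. This is a minimal sketch under stated assumptions: the `RoadmapEntry` fields are hypothetical names that mirror the five questions, and the example entry is invented.

```python
from dataclasses import dataclass, fields


@dataclass
class RoadmapEntry:
    # Hypothetical field names, one per question above.
    journey: str = ""
    operating_defect: str = ""
    business_metric: str = ""
    delivery_teams: str = ""
    success_measure: str = ""


def missing_answers(entry: RoadmapEntry) -> list[str]:
    """Return the names of any questions the owner has left blank."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name).strip()]


draft = RoadmapEntry(
    journey="Billing disputes",
    operating_defect="Case ownership resets on every transfer",
    business_metric="Cost to serve",
    delivery_teams="Contact center operations, CRM team, finance",
    success_measure="",  # still unanswered
)

gaps = missing_answers(draft)
print("Roadmap-ready" if not gaps else f"Still a concept, missing: {gaps}")
```

A check like this only confirms that an answer exists. Whether the answer is any good still needs a human reviewer.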

I push teams to quantify the effect in terms leaders already use. Retention risk. Cost to serve. Revenue leakage. Compliance exposure. Agent productivity. A broken authentication flow, for example, is rarely just a channel issue. It affects abandon rates, transfers, containment, handle time, fraud controls, and customer trust at the same time.

Separate repairs from structural change

One of the fastest ways to lose credibility is to present a roadmap where every item looks equally urgent.

They are not.

I usually sort the portfolio into four lanes:

- Immediate operational fixes: queue logic, callback rules, form simplification, knowledge cleanup, agent guidance

- Cross-functional service redesigns: case ownership, complaint handling, exception management, status visibility

- Platform and data changes: CRM integration, desktop redesign, reporting model changes, identity orchestration, workflow tooling

- Policy and governance changes: decision rights, approval paths, escalation thresholds, audit checkpoints, VOC accountability

This prevents a common failure mode. Teams deliver a set of quick wins, declare momentum, and avoid the harder work that changes the experience at scale. Quick repairs matter. They also expire fast if the underlying workflow, data model, or policy stays broken.

Make every major initiative procurement-ready

A roadmap for an enterprise contact center has to survive more than an operations review. It has to survive architecture review, security review, finance scrutiny, and vendor challenge.

For that reason, I prefer a one-page brief for every major item. Each brief should include the current-state defect, target workflow, impacted systems, required approvals, implementation dependencies, delivery risks, and a success measure with an owner. That format exposes weak thinking early. It also prevents the handoff problem where operations approves an initiative that procurement and IT later redefine.

At this stage, digital and contact center work also need to stay connected. Teams that separate them usually create a cleaner front-end journey and a messier assisted-service flow. The article on designing a simple, secure digital customer journey is a useful companion because it addresses a trade-off I see often. Simplicity and control have to be designed together, not negotiated after requirements are locked.

For larger programs, I have used a formal enterprise CX roadmap planning process to pressure-test sequencing, ownership, and procurement exposure before the roadmap goes to funding.

What belongs off the roadmap

Some items should be removed, not deferred.

I cut work that depends on undefined policy decisions, vague AI use cases, or a platform purchase that has not passed workflow validation. I also cut initiatives that have no named owner outside the CX team. If no one in product, risk, IT, finance, or operations will co-own the result, the item usually stalls after approval.

A strong roadmap shows sequence, trade-offs, and proof. It does not promise that every customer problem gets solved in one wave, and it does not treat launch as the finish line.

Evaluate Technology and Vendor Readiness

Enterprise CX programs rarely fail because no one bought software. They fail because the buying decision ignored the operating model that has to carry the change for the next three years.

A polished demo can hide ugly reality. I have seen contact centers buy a platform that looked strong in a scripted evaluation, then spend the next nine months building workarounds for routing exceptions, audit controls, and reporting gaps that should have been surfaced before contract signature.

Test operating fit, not feature volume

Feature comparisons are easy to score and easy to defend in procurement. They also miss the failure points that show up in production.

The right evaluation asks whether the platform can support your service design under real constraints. That means messy handoffs, fragmented data, limited admin capacity, security review, and supervisors who need the system to work during peak volume without specialist support.

I test five areas before I trust a vendor shortlist:

| Area | What to test |
|---|---|
| Workflow fit | Can the platform support actual routing, escalation, approval, exception handling, and follow-up patterns without custom sprawl? |
| Data fit | How cleanly does it connect to CRM, identity, knowledge, WFM, QA, and back-office systems that already exist? |
| Service model fit | Can the vendor implement and support the solution with the staffing model, skills, and pace your team can sustain? |
| Control fit | Does it support audit trails, role-based access, retention rules, consent handling, and policy enforcement? |
| Adoption fit | Will supervisors, agents, admins, and analysts use it as designed, or will they bypass it within 60 days? |

That last point gets ignored too often. A platform can be technically sound and still fail if team leads cannot coach from it, analysts cannot trust the outputs, or admins need vendor support for every change.

Check buyer readiness before blaming the platform

Some vendor failures are real. Many are buyer failures with a vendor label attached later.

Before selection, review your own readiness in the areas that usually break an implementation:

- Process clarity: Are target workflows documented at the exception level, or is the team expecting the tool to settle unresolved policy questions?

- Data ownership: Who owns customer data quality, taxonomy, duplicate management, and cross-system mapping?

- Admin capacity: Do you have people who can run configuration, testing, release review, and permission changes after go-live?

- Training design: Is enablement tied to real call drivers, role expectations, and supervisor behaviors?

- Change leadership: Do operations leaders know what must change in QA, coaching, scheduling, and escalation management?

AI and automation programs often stall here. The model may work. The workflow around it does not.

Evaluate the vendor you will live with after go-live

The software matters. The delivery model matters just as much.

I want clear answers to questions that expose execution risk early:

- Who owns workflow configuration and integration design?

- How many client-side resources are assumed each week?

- What happens when your process conflicts with the product's default logic?

- How are releases communicated, regression-tested, and approved?

- What support exists for regulated workflows, legal hold, audit review, and access certification?

- Which roadmap items depend on custom work, and which are already in the product?

These questions change the economics of the decision. A lower license price can become the more expensive option if the product needs heavy professional services, custom middleware, or ongoing vendor dependency for simple configuration changes.

When teams need market context, outside comparisons can help define categories and shortlist logic. A list of best customer engagement platforms is useful for orienting the search. It should not replace scenario-based evaluation tied to your own operating requirements.

Use a vendor-neutral process for high-impact decisions

For routing, workforce management, analytics, knowledge, and compliance-sensitive tooling, I prefer a structured selection process with named decision criteria, weighted use cases, and clear approval rights. That reduces the political noise that enters once a favored vendor gets executive attention.

Cloud Tech Gurus supports vendor selection through a practitioner-led, vendor-neutral process. That matters when procurement, IT, security, and operations all need evidence for the same decision but care about different risks.

A disciplined process usually includes four parts.

- Scenario-based scoring: Vendors respond to real operating scenarios, including exceptions, transfers, rework, and reporting needs. Generic requirement spreadsheets rarely expose where the product breaks.

- Implementation diligence: The team reviews integration assumptions, professional services scope, timeline risk, and client workload. Hidden staffing requirements usually surface at this stage.

- Risk review: Security, compliance, business continuity, and admin sustainability get tested before the commercial decision is final. That saves time later and prevents expensive reversals.

- Reference validation: References should match your complexity, not just your industry. A five-hundred-seat regulated contact center should not rely on a reference from a simple deployment with a small service desk.
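The weighted use cases mentioned above can be kept honest with very little tooling. The sketch below assumes three hypothetical scenarios, assumed weights that sum to 1, and invented 1-to-5 scores from an evaluation panel. It also refuses to total a vendor that skipped a scenario, which is the failure a generic requirements spreadsheet tends to hide.

```python
# Assumed scenario weights agreed before any demo takes place.
scenario_weights = {
    "warm_transfer_with_context": 0.40,
    "policy_exception_approval": 0.35,
    "case_reopen_reporting": 0.25,
}

# Hypothetical panel scores, 1 (fails the scenario) to 5 (handles it cleanly).
vendor_scores = {
    "Vendor A": {
        "warm_transfer_with_context": 4,
        "policy_exception_approval": 2,
        "case_reopen_reporting": 3,
    },
    "Vendor B": {
        "warm_transfer_with_context": 3,
        "policy_exception_approval": 4,
        "case_reopen_reporting": 4,
    },
}


def weighted_total(scores: dict[str, int], weights: dict[str, float]) -> float:
    """Weighted sum across scenarios; every weighted scenario must be scored."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"Unscored scenarios: {sorted(missing)}")
    return round(sum(weights[name] * scores[name] for name in weights), 2)


totals = {vendor: weighted_total(s, scenario_weights) for vendor, s in vendor_scores.items()}
for vendor, total in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
    print(vendor, total)
```

Fixing the weights before the demos is the important design choice. Once a favored vendor has executive attention, weights that are still negotiable tend to drift toward that vendor's strengths.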

Good vendor evaluation protects the roadmap from demo theater, weak assumptions, and procurement speed. It also prevents a common enterprise mistake: buying a tool that solves one channel while creating new failure points in governance, support, and total operating cost.

Guide Implementation and Establish Governance

Buying the right tool or approving the right roadmap does not improve the customer experience by itself. Execution does. Many strong strategies weaken at this point. The project team launches the change, hands it to operations, and moves on. Six months later, the workflow has drifted, supervisors are coaching to old behaviors, and no one is sure which measures matter.

Stand up a real governance model

A CX strategy needs a standing operating mechanism, not an occasional steering meeting.

I prefer a cross-functional CX governance council with representation from:

- Operations: contact center, service delivery, back-office leaders

- Technology: enterprise apps, integration, data, security

- Customer channels: digital, self-service, branch or field where relevant

- Risk functions: compliance, legal, privacy, audit

- Enablement teams: QA, training, knowledge, WFM

- Finance or transformation office: for sequencing and funding discipline

This group should own prioritization changes, dependency resolution, issue escalation, and outcome review. It should not be a forum for status narration.

Tie implementation to workflow adoption

Most implementation plans overemphasize technical milestones. They underemphasize behavior change.

A practical rollout plan covers four tracks at once:

| Track | What must happen |
|---|---|
| Configuration | Build routing, desktop, knowledge, analytics, and security settings |
| Process | Update SOPs, escalation paths, exception handling, approvals |
| People | Train agents, supervisors, QA, workforce, and admins by role |
| Measurement | Define launch metrics, stabilization metrics, and post-launch review cadence |

Agents do not adopt a new process because the project team sent a communication. They adopt it when the process is clear, the tooling works, and supervisors reinforce the expected behavior.

Use supervisors as the adoption layer

In enterprise contact centers, frontline supervisors determine whether a CX change sticks.

They need more than awareness. They need:

- examples of what good use looks like

- known failure modes

- coaching guides tied to real interactions

- visibility into where agents are struggling

- authority to escalate defects quickly

Practical rule: If supervisors learn about a CX change at the same time as agents, the rollout is already behind.

I also recommend a formal stabilization period after launch. During that window, review defect logs, frontline feedback, knowledge gaps, and workflow exceptions weekly. Do not force the team to wait for a quarterly review to fix obvious friction.

Keep the business case alive after launch

Customer experience work should still connect to business outcomes after implementation. Otherwise the organization starts treating it as another service initiative with soft value.

That is a mistake because the long-term payoff is substantial. Companies that elevate customer experience from poor to excellent can reduce churn by 75% and nearly triple revenue growth over three years, according to McKinsey, and BCG found that CX leaders achieve 70% higher loyalty (Gainsight). Those outcomes do not come from one launch. They come from sustained operational excellence.

Governance cadence that works

A simple cadence is usually enough if the decision rights are clear:

- Weekly during launch and stabilization: Review defects, agent friction, queue impact, transfer issues, and training gaps.

- Monthly during active roadmap delivery: Review milestones, dependencies, funding changes, and emerging journey issues.

- Quarterly at the executive level: Review outcome trends, portfolio shifts, and major investment decisions.

The point of governance is not bureaucracy. It is continuity. CX improvement fails when no one keeps ownership after implementation.

Mitigate Common CX Strategy Pitfalls

Some CX failures are obvious. Others are subtle and expensive because they look like progress for a while.

The patterns below are the ones I would treat as a pre-mortem before approving any major CX program.

Mistaking survey movement for business improvement

A survey can tell you that customers noticed something. It cannot tell you whether the underlying economics improved.

I have seen teams celebrate score gains while transfer rates, repeat contact, complaint volume, and policy exceptions stayed flat. That usually means the organization improved one moment in the journey while leaving the operating defect in place.

The fix is straightforward. Pair perception metrics with operational and business measures. If the journey is better, you should be able to see it in resolution behavior, avoidable contacts, retention patterns, or service cost.
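A simple check can flag the pattern before anyone celebrates. This sketch assumes hypothetical before-and-after snapshots per journey and an assumed threshold of a 5% relative drop in at least one operational measure. The metric names and numbers are invented for illustration.

```python
# Hypothetical (before, after) snapshots per journey.
snapshots = {
    "claims_status": {
        "csat": (3.8, 4.2),
        "repeat_contact_rate": (0.22, 0.22),
        "transfer_rate": (0.18, 0.19),
    },
    "account_access": {
        "csat": (3.5, 4.0),
        "repeat_contact_rate": (0.30, 0.21),
        "transfer_rate": (0.25, 0.15),
    },
}


def score_only_gains(data, min_relative_drop=0.05):
    """List journeys where CSAT rose but no operational measure improved."""
    flagged = []
    for journey, metrics in data.items():
        csat_before, csat_after = metrics["csat"]
        if csat_after <= csat_before:
            continue  # no perception gain to question
        improved = any(
            (before - after) / before >= min_relative_drop
            for name, (before, after) in metrics.items()
            if name != "csat" and before > 0
        )
        if not improved:
            flagged.append(journey)
    return flagged


suspect = score_only_gains(snapshots)
print("Score moved, operation did not:", suspect)
```

A flagged journey is not proof that the score gain is hollow. It is a prompt to ask which moment improved and whether the operating defect behind it is still in place.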

Launching AI without a hard use case

AI is now easy to buy and easy to overuse.

If the business case is vague, the deployment usually turns into one of two things. Either the tool gets confined to a narrow pilot with no operational relevance, or it gets rolled out too broadly and creates trust, compliance, or workflow issues.

Use cases should be specific. Agent assist for complex policy retrieval is specific. Real-time summarization for an already broken after-call workflow may be useful, but only if downstream case handling is also fixed. Generative knowledge creation can help, but not if no one owns article approval and retirement.

Ignoring the agent experience

Teams still treat agent friction as secondary to customer friction. In practice, they are tightly linked.

When agents toggle between systems, work around bad knowledge, or wait on back-office answers, customers feel the delay and inconsistency. If you want to improve customer experience strategy in a durable way, reduce the work agents have to do to deliver a clean answer.

That means desktop design, process clarity, knowledge quality, and supervisor support belong inside the CX program. They are not side topics.

Key takeaway: Customers rarely experience an “agent issue” or a “system issue.” They experience one broken interaction.

Letting compliance arrive late

This is one of the most common mistakes in regulated industries. Teams define a target journey, shortlist vendors, and only then bring in compliance, privacy, or legal review.

That sequence creates rework.

In finance and healthcare, 70% of firms report that compliance requirements delay CX initiatives by 6 to 12 months. A frequent cause is failing to build compliance-by-design into technology selection and workflow definition from the start (Digital Leadership).

What works better:

- involve risk partners during requirements design

- map disclosures, consent, retention, and audit needs before vendor scoring

- test AI use cases against explainability and approval requirements

- validate role-based access and data segregation early

That is slower at the front end and faster overall.

Assuming omnichannel means adding channels

Adding chat, messaging, or a portal feature does not create an omnichannel experience. It creates more entry points.

A true omnichannel model preserves context, identity, and case continuity across channels. If the customer starts in self-service and ends with a live agent, the handoff should not reset the work. If the branch promises a callback, the center should see that commitment. If digital captures intent, routing should use it.

The mitigation is operational, not rhetorical. Define ownership for cross-channel continuity, build common case visibility, and track where context breaks.

Overloading the roadmap

Many teams try to fix service, sales, digital, AI, analytics, and knowledge in one wave. That usually produces broad activity and weak adoption.

The better approach is to limit active work to the few journey defects with the highest combined customer, operational, and risk impact. A narrower program with clear ownership tends to outperform a larger program with diffuse ambition.

Treating procurement as a late-stage event

If sourcing enters after the business case is built and the tool category is assumed, procurement becomes a gate instead of a design partner. That increases the odds of misaligned requirements and weak contract terms.

Bring procurement in early when platform choice affects implementation model, data rights, support structure, and total operating impact. That is especially important for AI, analytics, and multi-system orchestration work.

Conclusion

The teams that improve CX consistently do not rely on slogans. They run the work like operations.

They diagnose the current state with evidence. They prioritize a roadmap that can survive executive scrutiny. They evaluate technology based on workflow fit, not demo quality. They implement with supervisor-level discipline. Then they govern the work long enough for it to change the experience in a measurable way.

That is the practical standard for any leader trying to improve customer experience strategy in a complex contact center. Not more activity. Better sequencing, clearer ownership, and stronger operational follow-through.

If you run a large service organization, you already know the hard part is not identifying what customers want. The hard part is building an environment where the organization can deliver it consistently across channels, systems, and teams.

That is why CX strategy has to be treated as an operating discipline. When it is, the work becomes easier to fund, easier to implement, and much harder to derail.

Cloud Tech Gurus provides vendor-neutral, practitioner-led consulting for contact center and CX leaders. Learn more at cloudtechgurus.com.