Most advice on customer experience in call center operations is too soft and too narrow. It treats CX as an agent behavior issue, a script issue, or a software issue. That is usually why the program stalls.

A friendlier tone does not fix a broken routing model. Better QA forms do not help if customers repeat the same information across IVR, chat, and voice. A new CCaaS platform does not improve experience if finance still rewards handle time, operations still rewards occupancy, and digital teams still own self-service in a separate silo.

The common failure pattern is simple. Leaders launch a CX initiative as a side program while the operating model stays intact. The center still measures speed more heavily than resolution. Product teams still do not see call reasons in time to act. Workforce teams still plan for efficiency first and recovery second. Agents still carry the burden of process design flaws they did not create.

That is why many customer experience efforts look busy but produce little. The organization upgrades channels, adds dashboards, and retrains supervisors, yet customers feel the same friction. Sometimes they feel more of it because more technology means more handoffs, more alerts, and more complexity behind the curtain.

The business stakes are not abstract. Qualtrics reports that consumers are 2.6x more likely to purchase more when wait times are satisfactory, 53% cut spending after a bad experience, and companies prioritizing CX see 41% faster revenue growth (Qualtrics contact center trends). That is not a branding exercise. It is an operating discipline with direct financial consequences.

The leaders who get this right stop asking, “How do we make calls nicer?” They ask harder questions.

Which moments create avoidable effort? Which policies force transfers? Which metrics are rewarding the wrong behavior? Which technologies reduce friction, and which are just adding another layer to manage?

Introduction: Why CX Programs Keep Failing

Most failed CX programs have one thing in common. They try to improve perception without fixing execution.

In practice, customer experience in call center environments breaks down when leaders separate service quality from operating design. The frontline gets coaching on empathy while the customer still sits in a queue, enters an account number twice, gets routed to the wrong team, and hears, “Let me transfer you.”

That is not a training problem. It is a systems problem.

The usual bad assumptions

Leaders often inherit one of these assumptions:

- Better scripts will improve CX: Scripts can improve consistency, but they cannot compensate for poor knowledge access, weak routing, or rigid policies.

- A new platform will solve the issue: Platforms matter. Integration, workflow design, and governance matter more.

- CSAT alone tells the story: Satisfaction scores are useful, but they rarely explain where effort was created or why customers had to call in the first place.

- AHT is a safe efficiency metric: AHT is easy to report and easy to misuse. Teams that overmanage it often push agents to end calls before the customer’s issue is closed.

The result is predictable. The center gets operationally tighter on paper and worse for the customer in practice.

What causes the failure

Three conditions show up again and again.

First, metrics conflict. Operations pushes speed. Customer teams push satisfaction. Finance pushes labor control. No one owns the trade-offs.

Second, data sits in functional silos. Voice data, CRM history, digital interactions, WFM trends, and QA findings rarely land in one decision loop. Leaders think they have visibility because they have reports. They do not have visibility if they cannot connect cause to outcome.

Third, technology gets deployed without workflow redesign. New AI tools, analytics platforms, and automation layers often get added to legacy processes instead of replacing bad ones.

If the process still requires the customer to compensate for internal complexity, the experience has not improved. It has only been digitized.

Strong CX programs start when leadership treats the contact center as an operational engine, not a service facade. That means fewer cosmetic fixes and more structural decisions.

Defining True Customer Experience in the Call Center

True customer experience in the call center is broader than the call itself. It includes what the customer had to do before reaching you, what happened during the interaction, and whether the problem stayed fixed after the conversation ended.

That sounds obvious. It rarely shows up in how centers are run.

A lot of teams still define CX too narrowly. They focus on agent courtesy, script compliance, and survey scores after the call. Those matter, but they sit at the end of a chain of upstream decisions about routing, staffing, channel design, knowledge management, policy, and case ownership. If those decisions are wrong, the agent inherits the failure and the customer feels the cost.

Customers usually judge the experience through three filters.

| CX dimension | What the customer is really asking | What leaders should inspect |
|---|---|---|
| Effort | How hard was this to get done? | Wait time, transfers, repeats, authentication friction, channel switching |
| Emotion | How did this interaction make me feel? | Tone, empathy, confidence, ownership, clarity |
| Outcome | Was my issue resolved? | First contact resolution, next-step completion, callback reliability, case closure quality |

The sequence matters. Effort lands first: a customer who has repeated the issue three times is already frustrated before the agent says hello. A warm tone can steady the interaction, but it does not erase avoidable effort. Resolution still carries the most weight. If the problem comes back tomorrow, the experience was poor, even if the survey response looked fine on the day of contact.

This is why CX should be treated as an operating model, not a service aspiration. The center has to align channel design, policies, workforce planning, desktop tools, QA standards, and handoffs across functions. If even one of those breaks, the customer experiences the organization as disjointed. Leaders may see separate teams. Customers see one company that made them work too hard.

That makes CX a business control issue. Poor experience raises repeat contacts, rework, escalations, and avoidable concessions. Good experience lowers customer effort and removes friction from the operation itself. The financial effect usually shows up first in volume and labor before it shows up in brand tracking.

Leaders also miss where the experience is being created. It often has little to do with the agent’s skill alone. Finance creates confusion in billing. Digital teams create dead ends in self-service. Product creates onboarding gaps. Operations then asks the contact center to absorb the fallout while still hitting service level and handle time targets. That is one reason technology investments disappoint. New tools get added, but ownership for the root cause stays fragmented.

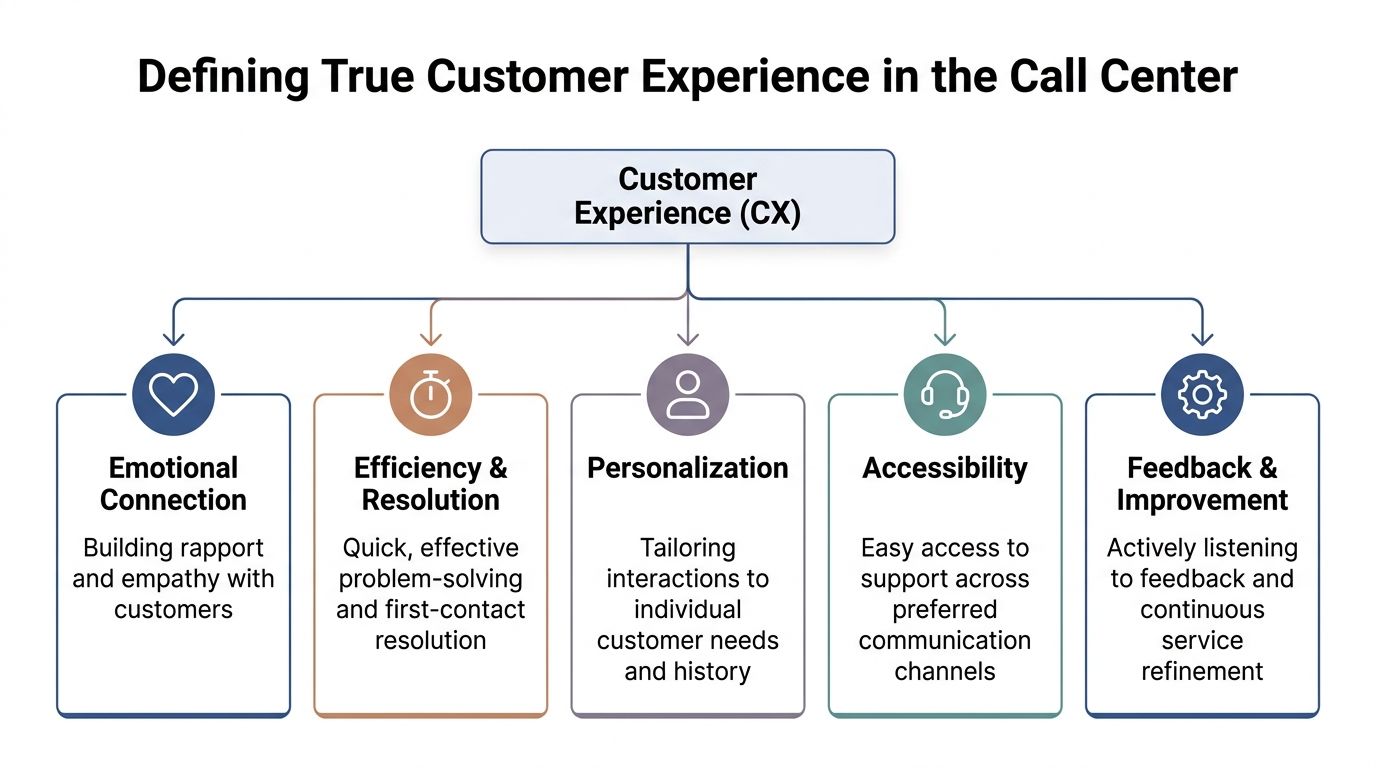

In practice, strong customer experience in call center operations comes down to five components:

- Accessibility: Customers can reach the right channel and the right queue without unnecessary friction.

- Efficiency and resolution: The issue gets handled without avoidable delay, transfers, or repeat work.

- Personalization: The agent has enough context to continue the journey instead of restarting it.

- Emotional connection: The customer feels heard, respected, and guided through the next step.

- Feedback and improvement: Contact reasons, failure patterns, and workflow defects get routed back to the teams that can fix them.

I learned this the hard way. Centers improve faster when every contact is treated as both a service interaction and a signal of a possible operational defect, policy gap, or process that needs redesign.

That is what true CX means in a call center. The experience is only as good as the organization behind the agent.

Mapping the Moments That Define the Journey

Most leaders know their metrics. Fewer can walk the journey the way a customer experiences it.

That difference matters because customer experience in call center environments is shaped by a sequence of moments, not a single conversation. If one of those moments goes wrong early, the agent starts the interaction in recovery mode.

Zoom notes a clear expectation gap. Nearly 80% of customers expect short wait times, but that happens only about 60% of the time, and 76% of consumers become frustrated when personalized, efficient service does not materialize (Zoom call center statistics). That gap shows up across the journey, not just in the queue.

Moment one, the need to contact you at all

The first question is not how the call went. It is why the customer had to call.

Some contacts are high value and necessary. Others are failure demand. Billing confusion, order status ambiguity, identity verification loops, broken self-service, and conflicting messages from digital channels all create avoidable volume.

When leaders skip this step, they optimize the handling of demand they should have prevented.

Moment two, entry and routing

At this stage, many centers lose the customer before the agent ever speaks.

Common friction points include:

- Long or confusing IVR trees: Customers do not want to decode your org chart.

- Poor intent capture: “Press 3 for something close enough” creates misroutes.

- Reauthentication: Asking for the same details repeatedly signals disorganization.

- Channel breakage: A customer starts in chat, moves to voice, and the context disappears.

A clean entry experience reduces both effort and emotional friction. A messy one makes every downstream metric harder to improve.

Moment three, the live interaction

Once the call reaches an agent, three things matter more than any script.

The agent needs context, authority, and a path to resolution.

Without context, the customer repeats themselves. Without authority, the issue escalates. Without a path, the agent improvises around process gaps and hopes the case lands with the right back-office team.

Many centers overfocus on courtesy language and underinvest in knowledge access, workflow design, and case ownership.

The customer rarely remembers your internal handoff logic. They remember whether someone took responsibility.

Moment four, post-call follow-through

A surprising amount of CX damage happens after the call.

The center promises a callback that never comes. A replacement is processed but not confirmed. A case note is too thin for the next team to act on. The customer gets an automated survey before the issue is complete.

These failures are especially costly because the customer believed the interaction was over.

A practical audit lens

Walk five recent call types and inspect each stage using these questions:

- What triggered the contact?

- Where did the customer expend avoidable effort?

- Where did context get lost?

- Did the first agent have what they needed to resolve?

- Was the next step explicit, owned, and completed?

That exercise usually surfaces more truth than a monthly scorecard. The friction tends to be obvious once leaders stop reading the journey as a set of departmental metrics and start reading it as a single customer event.

How to Measure What Matters

If you still run CX with an efficiency dashboard plus a survey score, you are not measuring customer experience. You are measuring fragments.

The issue is not that traditional metrics are useless. The issue is that many centers overweight them because they are easy to extract from the platform. Average handle time, speed of answer, and abandonment all tell you something. None of them tell you whether the customer’s problem was solved with acceptable effort.

Why the usual dashboard misleads

AHT is the clearest example. Lower AHT can reflect strong execution. It can also reflect rushed calls, avoidable callbacks, shallow troubleshooting, or premature transfers.

Abandonment has the same problem. A lower abandonment rate can mean your staffing model improved. It can also mean customers stayed trapped in queue because they had no alternative.

That is why I prefer a balanced scorecard built around three lenses.

| Lens | Core question | Useful measures |
|---|---|---|
| Outcome | Did we solve it? | FCR, case closure quality, repeat contact patterns |
| Effort | How hard was it? | Transfer rate, repeat authentication, customer effort indicators |
| Perception | How did the customer experience it? | CSAT, NPS, open-text feedback, sentiment trends |

No single metric should carry the entire story. The value comes from reading them together.
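To make "reading them together" concrete, here is a minimal Python sketch that computes one outcome measure (FCR) and two effort measures (transfer rate, repeat authentication) from the same interaction log. It is illustrative only: the `Contact` record fields, the 7-day repeat window, and the FCR proxy (a contact counts as resolved if the same customer does not contact you again about the same reason within the window) are all assumptions, and real definitions vary by operation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Contact:
    # Hypothetical record shape; real fields depend on your CCaaS and CRM exports.
    customer_id: str
    reason: str         # contact reason or detected intent
    started: datetime
    transfers: int      # transfers during this contact
    auth_prompts: int   # times the customer was asked to verify identity


def scorecard(contacts: list[Contact],
              repeat_window: timedelta = timedelta(days=7)) -> dict[str, float]:
    """Outcome and effort measures from one log, using assumed definitions."""
    if not contacts:
        raise ValueError("no contacts to score")
    # Put each customer's contacts about the same reason next to each other, in time order.
    ordered = sorted(contacts, key=lambda c: (c.customer_id, c.reason, c.started))
    resolved = 0
    for current, nxt in zip(ordered, ordered[1:] + [None]):
        # FCR proxy: no follow-up contact on the same reason inside the window.
        repeated = (
            nxt is not None
            and nxt.customer_id == current.customer_id
            and nxt.reason == current.reason
            and nxt.started - current.started <= repeat_window
        )
        if not repeated:
            resolved += 1
    n = len(contacts)
    return {
        "fcr": resolved / n,
        "transfer_rate": sum(c.transfers > 0 for c in contacts) / n,
        "repeat_auth_rate": sum(c.auth_prompts > 1 for c in contacts) / n,
    }


t0 = datetime(2024, 1, 1, 9, 0)
log = [
    Contact("A", "billing", t0, transfers=1, auth_prompts=2),
    Contact("A", "billing", t0 + timedelta(days=2), transfers=0, auth_prompts=1),
    Contact("B", "order_status", t0, transfers=0, auth_prompts=1),
    Contact("C", "refund", t0, transfers=2, auth_prompts=1),
]
print(scorecard(log))
# {'fcr': 0.75, 'transfer_rate': 0.5, 'repeat_auth_rate': 0.25}
```

Customer A's first billing call counts against FCR because A came back about billing two days later, which a handle-time report would never reveal. The same call also shows up in both effort measures, which is the point of reading the lenses side by side.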

FCR matters more than many teams admit

First contact resolution is one of the strongest practical indicators of CX health because it sits at the intersection of process, training, routing, and authority.

If FCR is weak, the problem is rarely just agent performance. It often points to poor intent capture, weak knowledge management, fragmented systems, or policy barriers that force unnecessary handoffs.

A good QA approach should reflect that. If your QA form grades courtesy and compliance while missing whether the customer had to come back, the scorecard is too narrow. The same issue shows up in many sampling-based programs. Reviewing only a small slice of interactions limits what supervisors can see. This is why broader quality visibility matters, and why many leaders are rethinking approaches like traditional call sampling in QA programs.

Sentiment is finally operational, not just observational

For years, leaders treated sentiment as interesting but secondary. That has changed.

AI-powered sentiment analysis can monitor language patterns and tone during the interaction, flag frustrated customers in real time, and help agents adjust before the call deteriorates. It also gives supervisors and CX leaders a way to connect recurring sentiment shifts to product issues, training gaps, or broken workflows (Call Center Studio on AI and automation in CX).

That is useful because survey data is always partial. Many customers never respond. The ones who do often represent the extremes. Sentiment analysis gives you another layer of evidence inside the live interaction itself.
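The real-time flagging idea can be sketched in a few lines of Python. This is a hypothetical illustration, not how any particular sentiment product works: it assumes an upstream model already scores each customer utterance from -1.0 (very negative) to 1.0 (very positive), and the window size and threshold are placeholder values. The alert fires once when the rolling average crosses the threshold, rather than on every negative utterance, so the agent is not flooded with prompts.

```python
from collections import deque
from typing import Iterable, Iterator


def frustration_alerts(scores: Iterable[float], window: int = 3,
                       threshold: float = -0.4) -> Iterator[int]:
    """Yield the utterance index each time rolling mean sentiment newly drops below threshold.

    `scores` are assumed per-utterance sentiment values in [-1.0, 1.0]
    produced by some upstream model (hypothetical).
    """
    recent = deque(maxlen=window)  # keeps only the last `window` scores
    flagged = False
    for i, score in enumerate(scores):
        recent.append(score)
        mean = sum(recent) / len(recent)
        if len(recent) == window and mean < threshold:
            if not flagged:
                yield i          # alert once per negative stretch
                flagged = True
        else:
            flagged = False      # sentiment recovered; allow a future alert


call = [0.2, 0.0, -0.3, -0.6, -0.7, -0.5, 0.1, 0.4]
print(list(frustration_alerts(call)))  # [4]
```

The edge-triggered design is a deliberate choice: one timely alert is something an agent can act on, while a prompt on every negative sentence quickly becomes noise.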

What to stop doing

A few measurement habits create more confusion than insight:

- Scoring channels separately without a unified journey view: The customer experiences one journey, not your reporting structure.

- Using QA as a compliance-only tool: Compliance matters, but it is not the same as experience quality.

- Chasing vanity improvements: Faster answer times look good in decks. They do not guarantee lower customer effort.

- Reporting without diagnosis: A dashboard that shows decline without exposing root cause is only half a management tool.

The best measurement model is not the one with the most KPIs. It is the one that helps leaders decide what to fix next.

The Technology Stack That Enables Modern CX

A modern CX stack should reduce effort for customers and complexity for employees. Too often it does the opposite. Leaders buy point solutions for routing, QA, AI assistance, workforce planning, and analytics, then spend the next year trying to stitch them into a coherent operating model.

The stack only works when each layer has a clear job.

The foundation is still the interaction platform

The base layer is your CCaaS platform. It handles voice, digital channels, queue logic, routing, recording, reporting, and increasingly native AI capabilities.

That does not mean the platform should do everything. It means the platform has to orchestrate cleanly with CRM, knowledge, identity, case management, WFM, QA, and analytics. If the core platform cannot support clean data flow and context transfer, every CX improvement above it gets harder.

If your team is evaluating options, a broad overview of omnichannel contact center solutions is a practical place to compare architecture choices before you get pulled into feature demos.

AI belongs in routing, assistance, and automation

The best AI use cases are usually the least theatrical.

Intent-based agent matching is one of them. When AI analyzes incoming intent and sentiment and routes the contact to the best-matched agent, first contact resolution improves because the customer gets to someone equipped to handle the issue on the first attempt (intent-based agent matching and FCR). That is a better use of AI than adding a bot because the vendor demo looked polished.
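The matching logic can be sketched in Python. This is a minimal illustration under stated assumptions, not any vendor's routing algorithm: intent and sentiment are assumed to be detected upstream, and the agent profiles, proficiency scores, de-escalation score, and `sentiment_weight` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Agent:
    # Hypothetical agent profile; scores run from 0.0 to 1.0 and are illustrative.
    name: str
    available: bool
    proficiency: dict[str, float] = field(default_factory=dict)  # intent -> skill
    deescalation: float = 0.0  # assumed skill at steadying frustrated callers


def match_agent(agents: list[Agent], intent: str, negative_sentiment: bool,
                sentiment_weight: float = 0.5) -> Optional[Agent]:
    """Return the available agent best equipped for this intent, or None."""
    candidates = [a for a in agents if a.available and intent in a.proficiency]
    if not candidates:
        return None  # caller decides the fallback (general queue, callback, etc.)

    def score(agent: Agent) -> float:
        s = agent.proficiency[intent]
        if negative_sentiment:
            # Frustrated callers shift weight toward de-escalation skill.
            s += sentiment_weight * agent.deescalation
        return s

    return max(candidates, key=score)


agents = [
    Agent("Ana", True, {"billing": 0.9, "refund": 0.6}, deescalation=0.2),
    Agent("Ben", True, {"billing": 0.7}, deescalation=0.9),
    Agent("Cal", False, {"billing": 1.0}, deescalation=1.0),  # busy
]
print(match_agent(agents, "billing", negative_sentiment=False).name)  # Ana
print(match_agent(agents, "billing", negative_sentiment=True).name)   # Ben
```

Returning `None` when no available agent holds the skill forces an explicit fallback decision instead of a silent "close enough" misroute.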

The same applies to agent assist. Good tools help with knowledge retrieval, next-best-action prompts, note summarization, and real-time compliance guidance. Bad implementations flood the desktop with suggestions no one trusts.

For leaders comparing platforms and workflow tools, this guide to inbound call center software solutions is useful because it frames software choices around call handling realities, not just interface claims.

WFM and QA are part of CX, not back-office support tools

Many teams still treat workforce management as a labor control function. That is incomplete.

WFM decisions shape queue times, schedule adherence pressure, break timing, and staffing coverage for complex contact types. Those are customer experience variables.

QA is similar. If QA only checks compliance language and script adherence, it will not tell you whether agents are creating confidence, preserving context, and driving resolution. Modern QA needs conversation intelligence, thematic analysis, and better calibration across operations and risk teams.


What a healthy stack looks like in practice

A strong stack does a few things reliably:

- Preserves context: The agent sees prior interactions, case history, and channel transitions.

- Improves routing quality: Customers reach the best available team or specialist with less triage.

- Supports the agent in real time: Knowledge and guidance appear when needed, not after the fact.

- Feeds continuous improvement: Interaction data informs training, product fixes, and process redesign.

- Stays governable: Security, permissions, auditability, and compliance are built into the workflow.

What does not work is stacking tools faster than the organization can absorb them. When leaders add AI, a new QA layer, and a CRM overlay at the same time, adoption usually lags and frontline confidence drops.

The stack should serve the operating model. Not the other way around.

Navigating Common Transformation Challenges

CX transformation usually breaks down long after the contract is signed.

The failure point is the operating model. Teams buy platforms to fix customer pain, then keep the same incentives, approval paths, ownership gaps, and frontline workflows that created the pain in the first place. The software goes live. The experience barely moves.

Data silos keep the center reactive

Siloed data is rarely a reporting problem alone. It is an accountability problem.

Operations sees handle time and transfer rates. Digital teams see containment. Product sees defect trends on a lag. QA sees fragments of conversations. WFM sees staffing pressure without a clear view of why volume is rising. Each team can defend its own numbers while the customer experiences one broken journey.

That setup keeps the center in triage mode. Leaders spend time explaining spikes, backlog, and repeat contacts instead of removing the process failures that created them.

The practical issue is ownership. The contact center cannot fix a broken refund policy, unclear digital copy, or an identity step that fails half the time. If product, digital, compliance, and operations do not share decisions, the center becomes the cleanup function for everyone else.

Misaligned KPIs train the wrong behavior

Poor CX often comes from rational behavior inside a bad scorecard.

If finance pushes labor efficiency, operations pushes speed, QA pushes compliance language, and the executive team asks for better loyalty, supervisors end up managing contradictions. Agents feel those contradictions in real time. They are told to slow down for empathy, speed up for service level, avoid transfers, and still stay inside narrow policy rules.

That tension produces predictable outcomes. Agents rush calls that needed clarity. Supervisors coach to whatever metric is under the most pressure that week. Repeat contacts rise, and leaders call it an execution problem.

It is a design problem.

I have seen centers spend heavily on desktop tools and AI guidance while leaving comp plans and manager scorecards untouched. In that environment, new technology just helps teams do the wrong thing faster.

Poor AI governance adds friction instead of removing it

AI can reduce effort. It can also add a new layer of operational drag.

The common mistake is treating AI as a monitoring system before treating it as workflow support. If agents get more alerts, more scorecards, and more post-interaction scrutiny without better knowledge access, cleaner workflows, or fewer manual steps, the technology raises pressure instead of improving service.

Customers hear that quickly. Agents become hesitant. They follow prompts too strictly. Judgment drops. Calls sound flatter, and complex issues take longer to resolve because nobody wants to deviate from the system's suggestion.

The trade-off is straightforward. Oversight has value in regulated or high-risk environments. Too much surveillance, applied too early, weakens confidence on the frontline. Leaders need clear rules for where AI assists, where it recommends, and where a human still owns the decision.

AI should remove effort from the interaction. If it mainly increases monitoring, agents become less adaptive and customers get a thinner version of service.

Compliance and complexity expose weak ownership fast

Complex operations do not tolerate vague process control.

In regulated environments, small inconsistencies become material risk. One team verifies identity one way. Another documents exceptions differently. A third uses informal escalation paths because the official process is too slow. On paper, the workflow exists. In practice, it depends on local habits and experienced managers.

That is where many transformation programs stall. Leaders compare platforms, debate features, and negotiate pricing while the harder work sits untouched. Who owns policy interpretation? Who signs off on workflow changes? Who decides whether a control can be automated? Who is accountable when the digital journey and assisted journey produce different outcomes?

Those decisions shape CX more than feature lists do.

The practical response

Teams that make progress usually keep the response simple and disciplined:

- Create one decision forum with real authority: Operations, IT, digital, compliance, and product need shared decisions, not occasional check-ins.

- Set a clear metric hierarchy: Teams need to know which outcome wins when speed, quality, cost, and risk conflict.

- Test changes in live workflows before broad rollout: Supervisor input and frontline observation catch failure points that project teams miss.

- Track agent friction with the same seriousness as customer friction: Confusion, extra clicks, and conflicting guidance show up in calls before they show up in attrition data.

Technology can support better CX. It does not create alignment, process discipline, or shared accountability on its own. Those are management choices.

A Pragmatic Roadmap for Improving CX

Most centers do not need a dramatic reset. They need a sequence.

Trying to redesign journeys, replace platforms, deploy AI, rewrite QA, and retrain the frontline all at once usually creates confusion. A practical roadmap works better because it forces decisions in the right order.

Start with the current-state reality

Do not begin with vendor demos. Begin with operational evidence.

Pull a set of high-friction contact types. Review journey steps, routing paths, repeat contact patterns, transfer behavior, and post-call failure points. Listen to calls with supervisors, not just analysts. Compare what leaders think happens with what the customer experiences.

This first phase should answer a few blunt questions:

- Where is effort being created?

- Which contact types are most avoidable?

- Where does resolution break down?

- Which systems or policies force agents into workarounds?

If you skip this step, you risk buying technology for the wrong problem.

Align goals before changing tools

The second phase is governance.

Decide what the center is optimizing for, and in what order. If leadership says customer experience matters most but comp plans and scorecards still favor speed above all else, the initiative will drift back to old behavior.

A useful alignment model includes:

| Area | Decision leaders need to make |
|---|---|
| Customer outcome | What does a successful resolution mean? |
| Metric priority | When efficiency and experience conflict, which metric carries more weight? |
| Ownership | Which teams own journey fixes outside the contact center? |
| Escalation design | Who can approve policy or workflow changes quickly? |

This stage is less visible than a platform rollout. It is more important.

Modernize in phases, not in one motion

Technology change should follow the operational design, not replace it.

A practical sequence often looks like this:

- Fix routing and knowledge access first: Customers benefit quickly when the first agent has a better chance of resolving the issue.

- Address desktop friction next: Reduce swivel-chair work and broken handoffs between systems.

- Layer in analytics and AI assistance carefully: Start with use cases that support resolution and coaching.

- Expand automation where it removes effort: Do not automate failure. Remove the broken step first.

For teams building an AI adoption plan, this piece on automating customer support with generative AI is a useful reference because it keeps the focus on workflow fit instead of feature excitement.

Use tougher vendor selection criteria

Feature lists are not enough. Most vendors can demonstrate routing, dashboards, bots, and agent assist.

Harder questions include:

- Integration reality: How cleanly does the platform connect to your CRM, identity tools, knowledge base, and case workflows?

- Operational fit: Can supervisors and analysts manage it without heavy vendor dependence?

- Governance: Can compliance, QA, and IT enforce controls without slowing every change request?

- Flexibility: Can the solution support different lines of business, customer segments, and escalation models?

- Adoption burden: How much training and desktop change will the frontline absorb?

Too many buying teams score vendors on features they will rarely use and underweight the workflow issues that will shape adoption on day one.

The best vendor is rarely the one with the longest roadmap. It is the one your operation can implement, govern, and improve with discipline.

Treat optimization as permanent work

CX improvement is not a project with a finish line.

Once the first changes are live, leaders need a standing loop that connects interaction data, survey feedback, quality trends, and frontline input to process changes. Product, digital, and policy teams should be inside that loop, not outside it.

The centers that improve steadily are not the ones with the flashiest stack. They are the ones that build a management rhythm around identifying friction, testing fixes, and scaling what works.

Conclusion: The Shift to an Operational Discipline

Customer experience in call center operations improves when leaders stop treating it as a soft initiative and start managing it as an operating system.

That means defining CX through effort, emotion, and outcome. It means mapping the moments that create friction, measuring more than speed, and using technology to reduce complexity rather than add it. It means dealing with the parts vendors rarely dwell on, including data silos, conflicting KPIs, frontline adoption strain, and the risk of overmonitoring agents.

If you want a useful outside perspective on where automation fits into this work, this overview of automated customer experience improvement adds practical context.

The centers that improve customer experience consistently are not chasing trends. They build alignment, fix workflows, and keep learning from the operation itself.

Cloud Tech Gurus provides vendor-neutral, practitioner-led consulting for contact center and CX leaders. Learn more at cloudtechgurus.com.